Key Takeaways

- Modernization efforts must bridge the gap between agile cloud environments and rigid operational technology.

- "Acquired systems" from mergers and acquisitions pose a significant, often invisible, cryptographic risk.

- The transition to post-quantum standards requires a comprehensive inventory that accounts for infrastructure with decades-long lifecycles.

When we talk about the looming shift toward post-quantum cryptography (PQC), the conversation often drifts toward the exciting stuff. We talk about breaking RSA encryption, the timeline to "Q-Day," or the sci-fi implications of quantum networking. But if you look at the actual scope of what needs to be secured, the reality is much messier. It isn’t just about updating a few servers.

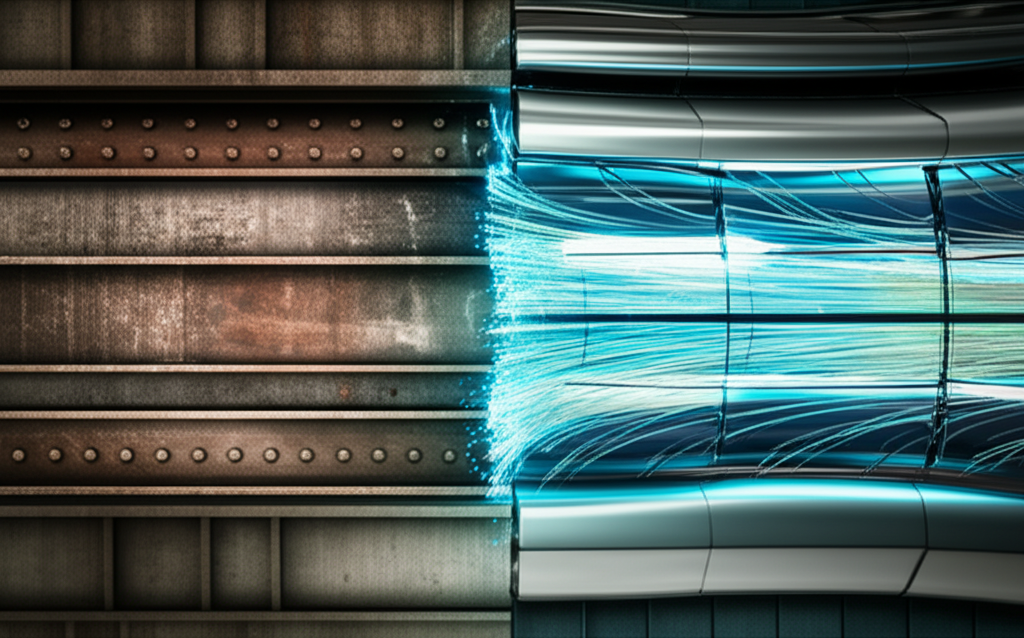

The migration surface is massive. It encompasses everything from agile cloud services to clunky, acquired systems, rigid operational technology, and long-lived infrastructure that was never designed to be patched, let alone upgraded to new cryptographic standards.

The scope spans quantum computing, quantum networking, and the entire messy history of enterprise IT architecture. And that is where the real problem lies.

The Speed Mismatch

Here’s the thing about modern enterprise architecture: it moves at two different speeds. On one hand, you have cloud services. These are generally managed by hyperscalers or SaaS providers who live and breathe updates. NIST finalized its first PQC standards (FIPS 203, 204, and 205) in August 2024, and as they roll out, your cloud provider will likely handle the heavy lifting for the platform layer. You might need to update some API calls or manage your own certificates, but the infrastructure itself is pliable.

Then you have the other side of the coin: the "long-lived infrastructure."

We are talking about systems that were deployed ten, fifteen, or even twenty years ago. In the world of Operational Technology (OT)—think power grids, manufacturing plants, water treatment facilities—longevity is a feature, not a bug. These systems are designed to run uninterrupted for decades. They use proprietary protocols, run on outdated real-time operating systems, and often rely on hard-coded cryptographic keys that can’t simply be swapped out via an over-the-air update.

Trying to shove a post-quantum algorithm, which typically brings much larger keys and signatures and different performance characteristics, into a logic controller from 2005 is like trying to fit a jet engine into a vintage sedan. It might fit, but the chassis is going to rattle apart.
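To make the size gap concrete, here is a rough sketch that checks a few primitives against a constrained device's message buffer. The byte counts are the published raw sizes for those parameter sets (classical figures exclude encoding overhead). The 512-byte limit and the `fits` and `report` helpers are hypothetical, chosen only for illustration.

```python
from typing import Dict

# Raw sizes in bytes for a few common parameter sets.
SIZES: Dict[str, int] = {
    "ECDSA P-256 signature": 64,
    "RSA-2048 signature": 256,
    "ML-DSA-44 signature": 2420,
    "ML-DSA-65 signature": 3309,
    "X25519 public key": 32,
    "ML-KEM-768 public key": 1184,
    "ML-KEM-768 ciphertext": 1088,
}

# Assumption: the largest single message this imaginary controller can buffer.
DEVICE_MAX_MESSAGE_BYTES = 512


def fits(size_bytes: int, limit: int = DEVICE_MAX_MESSAGE_BYTES) -> bool:
    """True if an object of this size fits in one device message."""
    return size_bytes <= limit


def report(limit: int = DEVICE_MAX_MESSAGE_BYTES) -> Dict[str, bool]:
    """Map each primitive to whether it fits under the given limit."""
    return {name: fits(size, limit) for name, size in SIZES.items()}


if __name__ == "__main__":
    for name, ok in report().items():
        status = "fits" if ok else "too large"
        print(f"{name:26s} {SIZES[name]:5d} bytes  {status}")
```

Under this made-up limit the classical signatures and keys fit and every post-quantum object does not. Real devices may fragment messages or have other bottlenecks (RAM, CPU, flash), so treat this as a first sanity check rather than a verdict.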

The "Acquired Systems" Blind Spot

Another layer of complexity comes from the business side of things. Most large organizations today are Frankensteins of past mergers and acquisitions. When a company buys a competitor, it isn't just acquiring revenue streams and customer lists; it is acquiring technical debt.

These "acquired systems" are often the most dangerous part of the network during a cryptographic migration. Why? Because the current IT team often doesn’t know what’s inside them. Documentation gets lost during the handover. The original developers left three years ago. The software might exist only as compiled binaries, with the source code sitting in a repository no one has access to.

If that acquired system relies on a specific legacy encryption standard to talk to the main database, and you upgrade the main database to be quantum-resistant, you break the connection. Suddenly, a critical business process halts, and nobody knows why because the system involved was "acquired" in 2014 and just worked until today.
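That failure mode can be sketched as a simple pre-upgrade check: given what each peer supports, which ones would have no algorithm in common with the upgraded database? The peer names, the algorithm labels, and the `negotiate` and `peers_broken_by_upgrade` helpers below are all hypothetical. Real protocols such as TLS negotiate in more detail, so this is only a model of the idea.

```python
from typing import Dict, List, Optional


def negotiate(server_preference: List[str], client_supported: List[str]) -> Optional[str]:
    """Return the first algorithm in the server's preference order
    that the client also supports, or None if there is no overlap."""
    for alg in server_preference:
        if alg in client_supported:
            return alg
    return None


def peers_broken_by_upgrade(
    peers: Dict[str, List[str]], new_server_preference: List[str]
) -> List[str]:
    """List the peers that could no longer connect after the upgrade."""
    return sorted(
        name
        for name, supported in peers.items()
        if negotiate(new_server_preference, supported) is None
    )


# Hypothetical inventory of what each client system can speak.
PEERS = {
    "billing-service": ["ML-KEM-768", "ECDHE-P256"],
    "acquired-payroll-2014": ["RSA-2048"],
}

OLD_DB = ["ECDHE-P256", "RSA-2048"]  # before the upgrade
NEW_DB = ["ML-KEM-768"]              # quantum-resistant only

if __name__ == "__main__":
    print("Broken before upgrade:", peers_broken_by_upgrade(PEERS, OLD_DB))
    print("Broken after upgrade: ", peers_broken_by_upgrade(PEERS, NEW_DB))
```

The check is only as good as the `PEERS` table, which is exactly the information that tends to be missing for acquired systems.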

It’s Not Just Defense

While much of the focus is on defense (protecting data from future quantum computers), the scope of this transition also looks forward. The guidance and planning happening now aren't purely reactive. They cover quantum computing and quantum networking as enabling technologies, too.

As organizations build out new infrastructure, they have to think about "crypto-agility." This is the ability to swap out encryption algorithms without rewriting the entire application stack. If you are laying fiber or launching satellites (classic long-lived infrastructure) today, you have to assume that the cryptographic standards you use now will be obsolete long before the hardware fails.

If you don't build in the capacity for quantum networking or PQC upgrades now, you are effectively building immediate obsolescence into expensive assets.
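One common way to get crypto-agility is to route every cryptographic call through a registry keyed by an algorithm identifier, and to store that identifier alongside the protected data. The sketch below is a minimal illustration using only the Python standard library. The HMAC variants are stand-ins (the standard library has no post-quantum primitives), and `REGISTRY`, `ACTIVE_ALGORITHM`, `protect`, and `verify` are hypothetical names.

```python
import hashlib
import hmac
from typing import Callable, Dict, Optional, Tuple

MacFn = Callable[[bytes, bytes], bytes]

# Algorithm identifier -> implementation. HMACs are stand-ins here;
# a real deployment would register its actual (including PQC) primitives.
REGISTRY: Dict[str, MacFn] = {
    "hmac-sha256": lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    "hmac-sha512": lambda key, msg: hmac.new(key, msg, hashlib.sha512).digest(),
}

# Assumption: in practice this would come from configuration, not code.
ACTIVE_ALGORITHM = "hmac-sha256"


def protect(key: bytes, msg: bytes, algorithm: Optional[str] = None) -> Tuple[str, bytes]:
    """Tag a message and return (algorithm id, tag) so the id travels with the data."""
    alg = algorithm or ACTIVE_ALGORITHM
    return alg, REGISTRY[alg](key, msg)


def verify(key: bytes, msg: bytes, alg: str, tag: bytes) -> bool:
    """Verify using whichever algorithm the data says it was protected with."""
    fn = REGISTRY.get(alg)
    if fn is None:
        return False  # unknown or retired algorithm
    return hmac.compare_digest(fn(key, msg), tag)


if __name__ == "__main__":
    key, msg = b"demo-key", b"telemetry frame"
    alg, tag = protect(key, msg)
    print(alg, verify(key, msg, alg, tag))
    # Swapping algorithms is a one-argument (or one-config-line) change.
    alg2, tag2 = protect(key, msg, "hmac-sha512")
    print(alg2, verify(key, msg, alg2, tag2))
```

Callers never name a primitive directly, so adding or retiring an algorithm touches the registry and configuration rather than the application stack. Old data stays verifiable for as long as its algorithm remains registered.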

The Inventory Nightmare

So, what is the immediate takeaway for CIOs and CISOs? It comes down to visibility. You cannot migrate what you cannot see.

Most organizations have a decent handle on their cloud footprint. But ask them for a detailed inventory of every piece of operational technology and every black-box system acquired over the last decade, specifically detailing the cryptographic libraries they use? Crickets.

This is why the transition is going to be a marathon. The math is the easy part—mathematicians and standards bodies are handling that. The hard part is the archaeology required to dig through layers of corporate sediment to find every instance of RSA-2048 or ECC hidden in the basement of the enterprise.
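A first pass at that archaeology can be as crude as searching configuration files and source trees for the names of quantum-vulnerable algorithms. The sketch below does exactly that. The pattern list is illustrative rather than exhaustive, and `scan_text` and `scan_tree` are hypothetical helpers. String matching will miss compiled binaries and hard-coded keys, so it complements rather than replaces dedicated discovery tooling.

```python
import re
from pathlib import Path
from typing import Dict, Set

# Illustrative patterns for algorithm families that Shor's algorithm threatens.
PATTERNS = {
    "RSA": re.compile(r"\bRSA(?:[-_ ]?\d{3,5})?\b", re.IGNORECASE),
    "ECC": re.compile(r"\b(?:ECDSA|ECDHE?|secp\d{3}[rk]1|prime256v1)\b", re.IGNORECASE),
    "DH/DSA": re.compile(r"\b(?:DHE|DSA|diffie[-_ ]?hellman)\b", re.IGNORECASE),
}


def scan_text(text: str) -> Set[str]:
    """Return the algorithm families mentioned in a piece of text."""
    return {family for family, pattern in PATTERNS.items() if pattern.search(text)}


def scan_tree(root: str, max_bytes: int = 1_000_000) -> Dict[str, Set[str]]:
    """Walk a directory and report which files mention which families.
    Files larger than max_bytes or unreadable files are skipped."""
    findings: Dict[str, Set[str]] = {}
    for path in Path(root).rglob("*"):
        try:
            if not path.is_file() or path.stat().st_size > max_bytes:
                continue
            text = path.read_text(errors="ignore")
        except OSError:
            continue
        hits = scan_text(text)
        if hits:
            findings[str(path)] = hits
    return findings


if __name__ == "__main__":
    for file_path, families in sorted(scan_tree(".").items()):
        print(f"{file_path}: {', '.join(sorted(families))}")
```

Even a rough list like this gives the inventory a starting point: every hit is a system someone needs to identify an owner for.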

We are moving toward a world where the security of data depends on the weakest link. In many cases, that link won't be the cutting-edge cloud service. It will be the forgotten server in the closet, the acquired system running payroll for the subsidiary, or the HVAC controller connected to the network.

Securing the future requires cleaning up the past. And that is going to take a lot more than a software patch.
