Harnessing Edge AI for Energy & Utilities: A Complete Guide for Enterprise Buyers

Key Takeaways

- Edge AI helps energy and utility operators shift from reactive maintenance to real-time operational intelligence.

- Effective data integration and model deployment at the edge remain the biggest barriers—and the biggest opportunities.

- Organizations benefit most when they pair distributed processing with a unified platform capable of alerting, orchestration, and continuous model improvement.

Definition and Overview

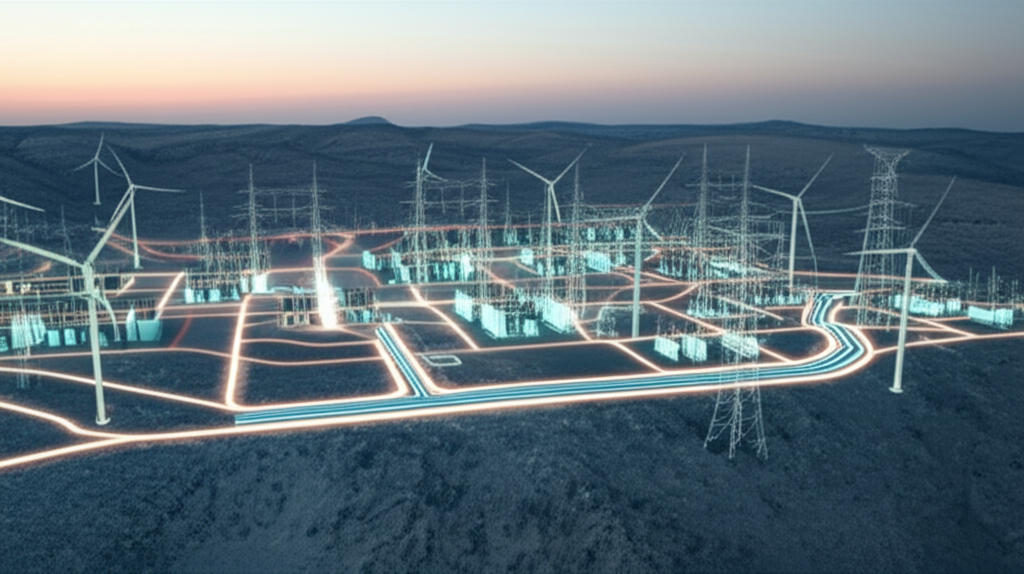

For most energy and utility leaders, the real issue isn’t adopting more technology. It’s wrestling with the messy sprawl of sensors, legacy SCADA systems, connected devices, and field equipment that all speak slightly different languages. Grid operators, pipeline networks, and power generators have been layering digital capabilities onto physical infrastructure for decades. But the edge—substations, turbines, EV chargers, transformers—has grown faster than the systems meant to make sense of it.

Here’s the thing: centralized cloud processing can’t always keep up. Latency matters when you’re managing line faults or monitoring transformer temperatures in a heatwave. So the industry’s interest in Edge AI feels inevitable. Compute closer to the asset. Models that run near real-time. Decisions that don’t wait for data to cross half a dozen networks. But this shift also introduces new challenges: fragmented data, uneven connectivity, and the difficulty of maintaining hundreds (or thousands) of edge nodes in sync.

That’s where platforms like Palantir Technologies Inc. have tried to rethink how organizations bridge the gap between central intelligence and distributed operations. Not by replacing existing systems, but by creating a more coherent fabric for data, models, and alerts to move across the edge-to-core continuum.

Key Components or Features

A few components tend to define the strongest Edge AI approaches in energy and utilities. They may sound familiar, though the industry’s execution of them has been uneven from one technology cycle to the next.

First, data integration that works under imperfect conditions. Edge environments rarely offer clean streams; instead you get noisy telemetry, intermittent connectivity, and protocols that predate the commercial internet. Any viable system needs to ingest data from field sensors, historians, control systems, and external sources—weather data is an increasingly big one—without forcing teams into endless custom engineering. Some platforms emphasize model deployment but underestimate this reality. The organizations that succeed usually start by normalizing their data foundation, even when it’s not glamorous work.
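To make the "normalize first" point concrete, here is a minimal sketch of mapping two differently shaped sources into one common record. Everything in it is an assumption for illustration: the `Reading` schema, the historian row layout (Fahrenheit, epoch seconds), and the sensor message layout (Celsius, ISO 8601) are hypothetical, not any vendor's actual format.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Reading:
    """Common shape every source is mapped into (an assumed schema)."""
    asset_id: str
    metric: str
    value: float
    unit: str
    ts: datetime  # timezone-aware, UTC


def from_historian(row: dict) -> Optional[Reading]:
    """Hypothetical historian row: tag 'ASSET.metric', value in Fahrenheit, epoch seconds."""
    try:
        asset_id, metric = row["tag"].split(".", 1)
        celsius = (float(row["val"]) - 32.0) * 5.0 / 9.0
        ts = datetime.fromtimestamp(float(row["time"]), tz=timezone.utc)
    except (KeyError, ValueError, TypeError, AttributeError):
        return None  # malformed rows are skipped rather than crashing the node
    return Reading(asset_id, metric, celsius, "degC", ts)


def from_sensor(msg: dict) -> Optional[Reading]:
    """Hypothetical sensor message: value already in Celsius, ISO 8601 timestamp."""
    try:
        ts = datetime.fromisoformat(msg["iso_time"]).astimezone(timezone.utc)
        return Reading(msg["device"], "oil_temp", float(msg["temp_c"]), "degC", ts)
    except (KeyError, ValueError, TypeError):
        return None
```

Once both sources land in the same shape, with the same units and time zone, downstream models and alerts no longer need to know where a reading came from.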

Second, distributed AI and model lifecycle management. A model is only as useful as its operational context. Deploying a predictive maintenance algorithm to a substation device is one thing; keeping it updated when conditions change is another. Utilities often talk about “AI drift” (more precisely, model drift) during wildfire seasons or storm surges, when edge conditions shift faster than a centralized retraining cadence can follow. Modern platforms lean on a feedback loop across the fleet: edge events inform model performance, which informs updates, which are pushed back out.
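One simple way an edge node could feed that loop is to track its own recent prediction error and flag when it degrades. The sketch below is an assumption-laden illustration, not a description of any platform's method: `DriftMonitor`, the rolling mean-absolute-error check, and the default window and ratio are all hypothetical choices.

```python
from collections import deque


class DriftMonitor:
    """Flags possible drift when recent error exceeds a multiple of the baseline."""

    def __init__(self, baseline_mae: float, window: int = 50, ratio: float = 1.5) -> None:
        self.baseline_mae = baseline_mae    # error measured when the model was validated
        self.ratio = ratio                  # tolerated degradation before flagging
        self.errors = deque(maxlen=window)  # most recent absolute errors only

    def record(self, predicted: float, actual: float) -> bool:
        """Store one prediction/outcome pair; return True if drift is suspected."""
        self.errors.append(abs(predicted - actual))
        if len(self.errors) < self.errors.maxlen:
            return False  # not enough evidence yet
        recent_mae = sum(self.errors) / len(self.errors)
        return recent_mae > self.ratio * self.baseline_mae
```

A node that returns `True` here would report the event upstream, where it could count as one input to a retraining decision. The thresholds would need tuning per asset class.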

And third, real-time alerting and orchestration. Real-time is a slippery phrase—what’s “real-time” for a grid operator may be overkill for a water utility. But fast, contextual alerts still matter everywhere. The bigger question is: can the system trigger actions automatically, or at least prompt operators with enough context to decide quickly? Without that, edge analytics become little more than dashboards on smaller computers.
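As a small illustration of keeping alerts useful rather than noisy, the sketch below suppresses repeats of the same condition on the same asset within a cooldown period. The `AlertGate` class, its parameters, and the five-minute default are assumptions made for this example; real systems usually add severity levels and escalation on top.

```python
from typing import Dict, Tuple


class AlertGate:
    """Lets an alert through only if the same one was not sent recently."""

    def __init__(self, cooldown_s: float = 300.0) -> None:
        self.cooldown_s = cooldown_s
        self._last_sent: Dict[Tuple[str, str], float] = {}

    def should_emit(self, asset_id: str, condition: str, now_s: float) -> bool:
        """Return True if this (asset, condition) alert should reach an operator."""
        key = (asset_id, condition)
        last = self._last_sent.get(key)
        if last is not None and now_s - last < self.cooldown_s:
            return False  # duplicate inside the cooldown window: suppress
        self._last_sent[key] = now_s
        return True
```

The caller passes in the timestamp, which keeps the logic easy to test and lets it use the sensor's event time rather than the node's clock.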

Benefits and Use Cases

Energy and utilities have been early adopters of operational data systems, but Edge AI opens new categories of value. Some are incremental; others shift the economics of entire asset classes.

One of the most consistent use cases is equipment health monitoring. Transformers, pumps, compressors, and distributed generation assets generate data continuously but have historically received only periodic inspection. Running models at the edge allows operators to spot anomalies that central systems might see too late. It’s not magic, just faster signal detection and, ideally, fewer false positives.
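For a sense of how lightweight edge-side detection can be, here is a minimal rolling z-score check on a single signal. It is a deliberately simple stand-in, assumed for illustration; production health models are typically richer, and the `RollingZScore` name, window size, and threshold are hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


class RollingZScore:
    """Flags a reading that sits far outside the recent window of values."""

    def __init__(self, window: int = 30, threshold: float = 3.0) -> None:
        self.buf = deque(maxlen=window)
        self.threshold = threshold

    def update(self, x: float) -> bool:
        """Score x against the current window, then add it to the window."""
        is_anomaly = False
        if len(self.buf) == self.buf.maxlen:
            mu = mean(self.buf)
            sigma = pstdev(self.buf)
            # A flat window (sigma == 0) gives no scale to judge against, so skip it.
            if sigma > 0 and abs(x - mu) / sigma > self.threshold:
                is_anomaly = True
        self.buf.append(x)
        return is_anomaly
```

Because it only keeps a short window in memory, a check like this can run on modest hardware next to the asset and forward only the flagged readings.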

There’s also the fast-growing space of grid flexibility and distributed energy resources. Rooftop solar, EV chargers, and home batteries create a more dynamic grid than the one most utilities were built for. Edge inference helps predict load, manage voltage, and coordinate local balancing without sending every decision upstream. It’s messy work, and utilities often underestimate the complexity the first time around. Still, the payoff can be substantial.

In the field, crews benefit from this shift too. Real-time alerts tied to sensor data can warn about hazardous conditions, pipeline pressure abnormalities, or sudden equipment heating. Some companies pair this with computer vision at the edge—substation cameras tagging intrusions or vegetation risks before they escalate. The mix of digital and physical operations becomes more tangible here.

Platforms like Palantir’s have approached these use cases by prioritizing operational context alongside data and models. Instead of siloed analytics, the focus tends to be on enabling frontline teams—grid operators, system dispatchers, reliability engineers—to interact with insights that fit into their existing processes. Not everyone in a control room wants a black-box model. They want something that explains its reasoning well enough to justify an action.

Selection Criteria or Considerations

Buyers evaluating Edge AI platforms should look beyond the buzzwords, especially in this sector. A few selection criteria tend to matter more than vendors sometimes admit.

- Can the platform integrate with legacy control systems without forcing disruptive upgrades?

- Does it support both centralized and distributed model deployment, and can it manage them at fleet scale?

- How well does it handle intermittent connectivity: can it queue data, store it locally, and sync later without losing fidelity?

- Are real-time alerts contextual enough to avoid alarm fatigue?

- And perhaps the trickiest: can your internal teams actually operate and extend the system without long-term vendor dependence?
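The connectivity question in the list above is easier to evaluate with a concrete picture of store-and-forward behavior. The sketch below is a simplified, in-memory illustration under stated assumptions: `StoreAndForward` and its `send` callback are hypothetical, and a real edge node would normally persist the queue to disk so data survives a restart.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Buffers readings locally and delivers them in order once the link is back."""

    def __init__(self, send: Callable[[dict], bool], max_items: int = 10000) -> None:
        self._send = send                      # returns True when delivery succeeded
        self._queue = deque(maxlen=max_items)  # when full, the oldest item is dropped

    def submit(self, reading: dict) -> None:
        self._queue.append(reading)

    def flush(self) -> int:
        """Try to deliver queued readings oldest-first; stop at the first failure."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break  # link still down: keep the item and retry later
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._queue)
```

Note the trade-off hidden in `max_items`: a bounded buffer protects the device but silently discards the oldest data during a long outage, which is exactly the kind of fidelity loss worth asking vendors about.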

Some leaders I’ve worked with over the years learned this the hard way—they bought the “AI” but underestimated the operational overhead. Solutions that emphasize a unified data model and operational workflows tend to be more sustainable. That said, no two utilities or energy operators are truly alike. What works for a large ISO won’t look the same as what works for a mid-sized gas utility with aging assets.

Future Outlook

Looking ahead, Edge AI in this sector is likely to be shaped by regulation, extreme weather, and the accelerating shift toward decentralized energy. There’s also growing interest in federated learning so that models can improve across regions without exposing sensitive operational data. Some operators are experimenting with more autonomous decision-making at the edge, such as automated switching and self-adjusting equipment, but cultural readiness varies widely.
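To show why federated learning appeals here, the sketch below implements the core aggregation step of federated averaging: each site shares only its model weights and sample count, never raw operational data. It is a toy illustration under assumptions (flat weight vectors, a hypothetical `federated_average` helper); real deployments add secure transport, multiple training rounds, and often privacy safeguards.

```python
from typing import List, Sequence, Tuple


def federated_average(updates: Sequence[Tuple[Sequence[float], int]]) -> List[float]:
    """Combine per-site weights into one model, weighted by each site's sample count.

    Each update is (weights, n_samples) from one site.
    """
    if not updates:
        raise ValueError("at least one site update is required")
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(updates[0][0])
    if any(len(w) != dim for w, _ in updates):
        raise ValueError("all sites must report weight vectors of the same length")
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]
```

Sites with more data pull the shared model further toward their local weights, while the central server only ever sees parameters and counts.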

Still, the trajectory points to deeper integration of data, models, and real-world operations. And solutions that combine strong data foundations with flexible AI deployment—like the approach taken by Palantir—will continue to influence how the industry evolves. As with past waves of industrial digitalization, the winners won’t necessarily be the ones with the flashiest algorithms, but the ones who make the entire ecosystem work together in the messiness of the real world.
