Key Takeaways

- Data Sovereignty is Critical: Organizations are moving away from centralized, one-size-fits-all models toward "sovereign" infrastructure that keeps data within specific borders or control frameworks.

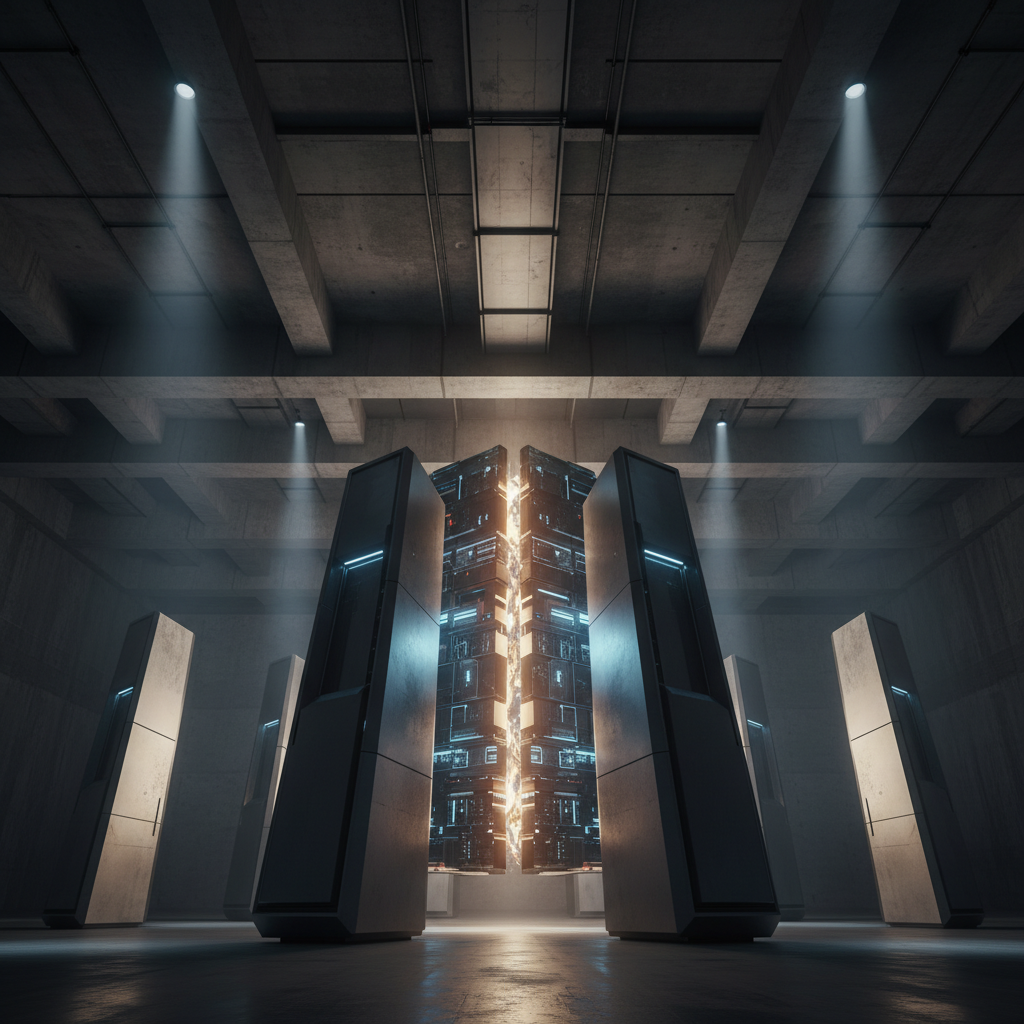

- Infrastructure Shifts: The rise of AI inferencing requires specialized, high-density data centers capable of supporting massive long-term compute loads.

- Strategic Stability: Long-term partnerships between facility owners and specialized AI providers are becoming the standard for ensuring reliable uptime and capacity.

In the rush to deploy artificial intelligence, a quiet revolution is happening in the concrete and steel structures that house the internet. We used to think of the cloud as this nebulous, floating entity. It’s not. It lives in very specific zip codes, draws massive amounts of power, and is increasingly being partitioned by borders.

Welcome to the era of Sovereign AI.

This isn't just about politics or compliance, though those play a huge role. It is about physics and latency. As businesses move from "playing around" with AI to actually running it (inferencing) at scale, the physical location of the compute power matters more than ever. Recent industry movements highlight this shift. In significant real estate transactions, for instance, the acquired data center is reportedly leased primarily, and on a long-term basis, to a “leading provider of sovereign AI and cloud inferencing solutions.”

That’s a mouthful, right? But it signals a massive change in how enterprise IT needs to think about buying and deploying AI services.

Definition and Overview

So, what exactly are we talking about here?

Sovereign AI refers to the capacity of a nation, or a specific enterprise entity, to produce artificial intelligence using its own infrastructure, data, workforce, and business networks. It’s the antithesis of the "black box" model where you send your data to a generic API hosted on a server you can't locate, to be processed by a model you don't control.

Cloud Inferencing, on the other hand, is the workhorse phase of AI. Training is when the AI goes to school; inferencing is when it goes to work. When you type a query into a chatbot and get an answer, that’s inferencing.

Combining these two creates a category of technology infrastructure focused on running live AI workloads in a secure, compliant, and often geographically specific environment.

Here's the thing. For years, the trend was centralization. Now, we are seeing a necessary fragmentation. Why? Because a bank in Frankfurt often cannot, legally or ethically, pipe its customer data to a server farm in Virginia for processing without extra safeguards in place. They need sovereign capability.

Key Components and Features

This category isn't defined by just one piece of software. It’s a stack.

1. Specialized Infrastructure:

Standard cloud servers often struggle with the thermal and power demands of modern AI. Sovereign AI providers typically rely on data centers built for high-density computing. These facilities are designed to handle the massive heat generated by continuous inferencing.

2. The "Sovereign" Cloud Layer:

This is the software environment. It guarantees data residency, ensuring that input data and the resulting inference never leave a specific jurisdiction.

3. Localized Models:

Often, these solutions utilize foundation models that have been fine-tuned for local languages, cultural nuances, or specific industry regulations (like HIPAA in healthcare or GDPR in Europe).

4. Long-Term Capacity Assurance:

You can't build a business strategy on spot-pricing compute that might disappear tomorrow. The providers in this space secure their footing through stability. As noted in recent market moves, facilities are often leased on a long-term basis to leading providers. This ensures that when an enterprise signs a contract, the hardware backing it isn't going anywhere.
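The residency guarantee described under the "Sovereign" Cloud Layer can be pictured as a routing rule: an inference request is only ever sent to an endpoint inside an approved jurisdiction, and it fails loudly if none exists. Here is a minimal sketch of that idea in Python. The `Endpoint` class, the `select_endpoint` function, and the endpoint names are all hypothetical, made up for illustration rather than taken from any real provider's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    """A hypothetical inference endpoint and the jurisdiction it sits in."""
    name: str
    jurisdiction: str  # assumed to be a country code such as "DE" or "US"


class ResidencyError(Exception):
    """Raised when no endpoint satisfies the residency policy."""


def select_endpoint(endpoints, allowed_jurisdictions):
    """Return the first endpoint located in an allowed jurisdiction.

    Raises ResidencyError instead of silently falling back to an
    endpoint outside the allowed set.
    """
    for endpoint in endpoints:
        if endpoint.jurisdiction in allowed_jurisdictions:
            return endpoint
    raise ResidencyError(
        f"no endpoint available in {sorted(allowed_jurisdictions)}"
    )


if __name__ == "__main__":
    # Illustrative data only: two made-up endpoints.
    candidates = [Endpoint("us-east-1", "US"), Endpoint("fra-1", "DE")]
    chosen = select_endpoint(candidates, {"DE"})
    print(chosen.name)
```

The design choice worth noticing is the hard failure. A real sovereign stack would enforce this at the network and hardware level too, but the principle is the same: no quiet fallback to a server across the border.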

Benefits and Use Cases

Why go this route? Why not just use the biggest, cheapest public cloud available?

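Regulatory Insulation

The landscape of digital law is getting messy. Governments are increasingly asserting "digital sovereignty." By utilizing a provider focused on sovereign AI, businesses insulate themselves from cross-border data transfer risks. If the data never leaves the country, it is much harder for a foreign entity to intercept or subpoena it. Residency alone is not a complete shield, though: a provider that is itself subject to foreign law can still face demands for data it holds locally, so ownership and control matter as much as location.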

Reduced Latency

Speed kills. Or rather, the lack of it does. For applications like autonomous driving, fraud detection, or real-time language translation, the physical distance between the user and the inference engine matters. Sovereign AI providers often distribute nodes closer to the edge, or within specific regional hubs, drastically cutting down response times.
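To put rough numbers on the distance argument, here is a back-of-the-envelope sketch in Python. It assumes light travels through optical fiber at about 200,000 km/s (roughly two thirds of its speed in a vacuum) and counts only propagation delay, ignoring routing hops, queuing, and the inference time itself. The distances are approximate figures chosen for illustration, not measurements of any real network path.

```python
# Assumption: signal speed in optical fiber, roughly 2/3 the speed of light.
FIBER_SPEED_KM_PER_S = 200_000


def round_trip_ms(distance_km):
    """Best-case round-trip propagation delay in milliseconds.

    Only the there-and-back travel time over fiber is counted, so real
    latency will always be higher than this figure.
    """
    return 2 * distance_km * 1000 / FIBER_SPEED_KM_PER_S


if __name__ == "__main__":
    # Illustrative distances (approximate, assumed for this example).
    transatlantic_km = 6_500  # roughly Frankfurt to Virginia
    regional_km = 50          # a user near a regional hub

    print(f"transatlantic: {round_trip_ms(transatlantic_km):.1f} ms")
    print(f"regional:      {round_trip_ms(regional_km):.1f} ms")
```

Under these assumptions the transatlantic round trip costs about 65 ms before any processing happens, while the regional hop costs about half a millisecond. For a fraud check that has to finish while a card is still in the reader, that gap is the whole budget.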

Predictable Performance

Public clouds can suffer from noisy neighbors. If everyone is hitting the same GPU cluster, performance can wobble. Dedicated sovereign solutions, supported by those long-term infrastructure leases, often offer more consistent throughput for heavy inferencing workloads.

Selection Criteria and Considerations

Choosing a partner in this space is different from swiping a credit card for a SaaS subscription. It’s an infrastructure decision.

Check the "Real Estate" Strategy

It sounds boring, but look at the provider's physical footprint. Do they have long-term control over their data centers? You want a partner who is anchored. The fact that major providers are securing long-term leases on acquired data centers is a green flag: it suggests financial health and operational stability.

Compliance Credentials

Does the provider just say they keep data local, or is the hardware physically incapable of routing it elsewhere? Ask for the architecture diagrams.

The Ecosystem Fit

Does their inferencing solution play nice with your existing tech stack? You don't want to re-architect your entire data lake just to run a model. The best providers act as a secure extension of your current environment, not a walled garden.

Future Outlook

We are moving toward a "multiverse" of clouds.

The idea of one single internet running on one single cloud is dead. The future is a federation of sovereign clouds, all talking to each other but maintaining strict borders.

Demand for AI inferencing is projected to outpace demand for training within the next few years. As that flips, the providers who have secured the best data center real estate and established the most robust sovereign frameworks will win.

For the enterprise buyer, the message is clear: Align with providers who aren't just renting space by the hour, but who are putting down roots. The "leading provider" mentioned in recent reports isn't just buying capacity; they are buying certainty. And in the AI era, certainty is the most valuable asset of all.
